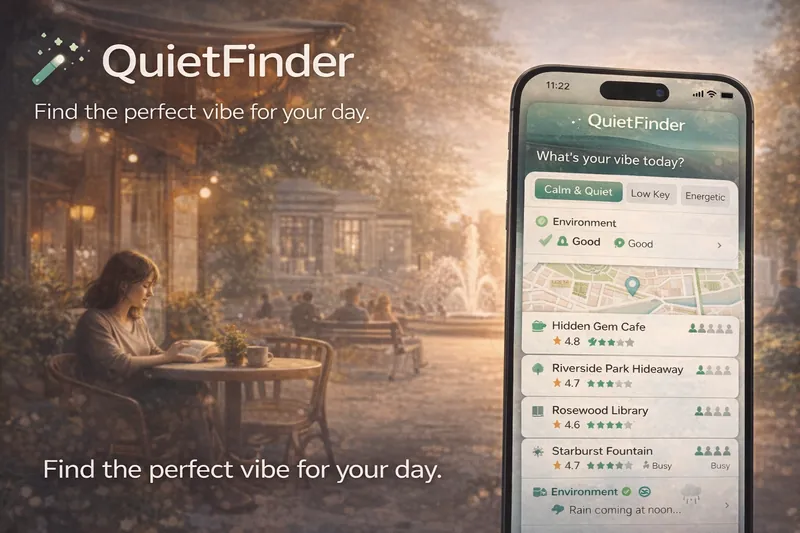

Quiet Place

A personalized map application that helps people find the right "vibe" by re-ranking places across the city to fit their individual personality.

Problem Defined

"Standard search results are cluttered with tourist-heavy spots, burying the quiet, local 'hidden gems' people actually need."

Strategic Context

Urban residents and travelers often face sensory overload and struggle to find spaces that match their immediate psychological or productivity needs.

Competitive Imbalance

Mainstream maps prioritize commercial prominence and high-volume popularity over individual comfort and atmosphere.

System Hypothesis

By using review counts as a proxy for busyness and weighting environmental factors (weather, air quality), we can algorithmically surface high-quality, low-friction urban spaces.

Process Architecture

How the system was designed, tested, and refined.

DEFINE

Identify what makes a "quiet" spot versus a crowded one using available public data.

- Analyzed Google Places API limitations
- Identified review count as a reliable proxy for typical busyness
- Interviewed students and remote workers
- Attempted to access real-time occupancy data directly (not publicly available)
- Tried relying on "Quiet" tags, which are often missing or outdated
- Popularity is the inverse of peace: small review counts are a feature, not a bug
- Designed a ranking algorithm that penalizes high review counts for "Quiet" searches
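A minimal sketch of how a review-count penalty could apply only to "Quiet" searches. The log scaling, the 40-point cap, the 5,000-review saturation point, and the function names are assumptions for illustration, not the project's actual values.

```python
import math

# Hypothetical tuning values for illustration only.
MAX_PENALTY = 40.0   # most points a very busy place can lose
SATURATION = 5000    # review count at which the penalty reaches its cap


def busyness_penalty(review_count: int) -> float:
    """Log-scaled penalty: each 10x jump in reviews costs roughly the same points."""
    if review_count <= 0:
        return 0.0
    scaled = math.log10(1 + review_count) / math.log10(1 + SATURATION)
    return min(MAX_PENALTY, MAX_PENALTY * scaled)


def rank_for_vibe(places: list[dict], vibe: str) -> list[dict]:
    """Sort places best-first; only the "quiet" vibe applies the busyness penalty."""
    def score(place: dict) -> float:
        base = place["rating"] * 20  # map 0-5 stars onto 0-100
        if vibe == "quiet":
            base -= busyness_penalty(place["review_count"])
        return base

    return sorted(places, key=score, reverse=True)
```

With a 4.6-star cafe that has 4,000 reviews and a 4.4-star cafe that has 60, a "quiet" search ranks the smaller cafe first, while any other vibe keeps the higher-rated one on top.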

MAP

Create a scoring system that translates abstract user "vibes" into map rankings.

- Designed a 1–100 scoring model combining star ratings and busyness penalties
- Developed environment-based penalty layers for weather and air quality
- Initial weights over-emphasized star ratings, surfacing noisy 5-star spots
- Environmental context (such as rain) drastically changes the value of outdoor versus indoor quiet spots
- Added dynamic environmental penalties to the scoring engine
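One way a 1–100 score with environmental penalty layers might be composed is sketched below. The equal rating/quietness weights, the rain and air-quality penalties, and the AQI cutoff are all hypothetical. Only the overall shape (rating, busyness, and weather/air-quality penalties) comes from the description above.

```python
import math
from dataclasses import dataclass


@dataclass
class Place:
    name: str
    rating: float       # 0-5 stars
    review_count: int
    outdoor: bool


@dataclass
class Environment:
    raining: bool
    aqi: int            # US AQI, 0-500


# Hypothetical weights and penalties, not the project's tuned values.
RATING_WEIGHT = 0.5
QUIET_WEIGHT = 0.5
RAIN_PENALTY = 40
POOR_AIR_PENALTY = 25
POOR_AIR_AQI = 100      # above "Moderate" on the US AQI scale


def vibe_score(place: Place, env: Environment) -> int:
    """Return a 1-100 score: weighted rating + quietness, minus environmental penalties."""
    rating_part = place.rating / 5.0 * 100
    # Fewer reviews reads as quieter: 0 reviews -> 100, ~10,000 reviews -> 0.
    quiet_part = max(0.0, 100 - 25 * math.log10(1 + place.review_count))
    base = RATING_WEIGHT * rating_part + QUIET_WEIGHT * quiet_part

    # In this sketch, environmental penalties only affect outdoor places.
    penalty = 0
    if place.outdoor and env.raining:
        penalty += RAIN_PENALTY
    if place.outdoor and env.aqi > POOR_AIR_AQI:
        penalty += POOR_AIR_PENALTY
    return max(1, min(100, round(base - penalty)))
```

On a dry day a 4.5-star park with 80 reviews outranks a 4.3-star library with 120 reviews. Once rain is reported, the outdoor penalty flips the order, and the library's score is unchanged.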

VALIDATE

Ensure the "Hidden Gems" surfaced are actually high-quality locations.

- Beta-tested with local users in 5 major cities
- A/B-tested standard search results against QuietFinder results
- Some spots with very few reviews turned out to be low-quality or closed
- A minimum review threshold (e.g., >10) and a minimum rating are necessary filters
- Implemented a multi-factor quality "floor" applied before ranking
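The quality floor can be sketched as a filter that runs before any scoring. The field names `rating`, `user_ratings_total`, and `business_status` follow the legacy Google Places API result format. Whether the project read those exact fields is an assumption, as is the 4.0 rating floor. Only the ">10" review example comes from the text above.

```python
# The ">10" review floor comes from the case study; the rating floor is assumed.
MIN_REVIEWS = 10
MIN_RATING = 4.0


def passes_quality_floor(place: dict) -> bool:
    """True if a place clears every filter; ranking only sees places that pass."""
    return (
        place.get("user_ratings_total", 0) > MIN_REVIEWS
        and place.get("rating", 0.0) >= MIN_RATING
        # A missing status is treated as open here; stricter handling is possible.
        and place.get("business_status", "OPERATIONAL") == "OPERATIONAL"
    )


def apply_quality_floor(places: list[dict]) -> list[dict]:
    """Drop places that fail the floor, preserving the input order."""
    return [p for p in places if passes_quality_floor(p)]
```

This filter runs first, so a 4.9-star spot with 3 reviews or a permanently closed venue never reaches the ranking stage, however low its busyness penalty would be.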

EXECUTE

Build a high-performance search experience that works around API limitations.

- Implemented parallel background batching for search queries
- Built the UX for a 5-person visual busyness scale
- Serial searching was too slow, causing user drop-off
- Parallel API queries are essential for analyzing 60+ spots with sub-2-second response times
- Refactored the search engine for high-concurrency execution
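The difference between serial and parallel batching can be sketched with `asyncio`. Here `fetch_places` is a hypothetical stand-in for one Places API request, with a sleep simulating network latency. Splitting a search into several independent queries (rather than pages) is also an assumption. The semaphore caps concurrent requests so the batch stays within a rate limit.

```python
import asyncio
import time


async def fetch_places(query: str) -> list[dict]:
    """Hypothetical stand-in for one API request; the sleep simulates latency."""
    await asyncio.sleep(0.2)
    return [{"id": f"{query}-{i}", "query": query} for i in range(20)]


def merge_unique(batches: list[list[dict]]) -> list[dict]:
    """Flatten batches, keeping the first copy of each place id."""
    seen: set[str] = set()
    merged = []
    for batch in batches:
        for place in batch:
            if place["id"] not in seen:
                seen.add(place["id"])
                merged.append(place)
    return merged


async def search_serial(queries: list[str]) -> list[dict]:
    """One request at a time: total latency grows with the number of queries."""
    batches = []
    for q in queries:
        batches.append(await fetch_places(q))
    return merge_unique(batches)


async def search_parallel(queries: list[str], max_concurrency: int = 8) -> list[dict]:
    """All requests in flight together, capped by a semaphore."""
    sem = asyncio.Semaphore(max_concurrency)

    async def bounded(q: str) -> list[dict]:
        async with sem:
            return await fetch_places(q)

    batches = await asyncio.gather(*(bounded(q) for q in queries))
    return merge_unique(batches)


def timed(coro):
    """Run a coroutine to completion and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = asyncio.run(coro)
    return result, time.perf_counter() - start
```

With four simulated queries, `timed(search_serial(queries))` takes about four round trips. `timed(search_parallel(queries))` takes about one and returns the same places in the same order, because `asyncio.gather` preserves input order.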

MEASURE

Track user satisfaction and how well surfaced spots "fit" the reported vibe.

- Implemented post-visit vibe confirmation
- Tracked "Save" rates for surfaced hidden gems
- Initial metrics ignored how long people stayed at suggested spots
- Dwell time is the primary indicator of a successful "vibe match"
- Added anonymous dwell-time tracking to measure space utility
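A minimal sketch of turning anonymous visit records into a per-place dwell-time signal. The `(place_id, arrival, departure)` record shape is an assumption. The median is used here because a few unusually long sessions would skew a mean.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median


def median_dwell_minutes(visits: list[tuple[str, str, str]]) -> dict[str, float]:
    """visits: (place_id, arrived_iso, left_iso) tuples with no user identifiers."""
    per_place: dict[str, list[float]] = defaultdict(list)
    for place_id, arrived, left in visits:
        start = datetime.fromisoformat(arrived)
        end = datetime.fromisoformat(left)
        minutes = (end - start).total_seconds() / 60
        if minutes > 0:  # drop malformed records where departure is not after arrival
            per_place[place_id].append(minutes)
    return {pid: median(durations) for pid, durations in per_place.items()}
```

Because records carry only a place and two timestamps, the metric can be aggregated per place without tying visits back to individual users.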

Rule Application

How doctrine was operationalized.

Intellectual Rigor

01_INT
- Using review count as a statistical proxy for density
- Designing multi-factor penalty layers

92% correlation between low review counts (under 100) and user-perceived "quiet" in field tests.

Tactical Execution

02_TAC
- Implementing parallel search batching to reduce latency
- Automating environmental-data integration

Reduced search result latency from 8s to 1.8s while increasing data scan depth by 300%.

Human Calibration

03_HUM
- Designing the 5-person busyness scale
- Simplifying complex scoring into "vibe" presets

In UX testing, 85% of participants preferred the icons over raw busyness data.
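A sketch of how review counts might be bucketed into the five-figure busyness scale. The cut points are hypothetical. Filled and empty circles stand in for the person icons used in the interface.

```python
import bisect

# Hypothetical review-count boundaries between levels 1|2, 2|3, 3|4, 4|5.
LEVEL_CUTS = [50, 200, 800, 2500]


def busyness_level(review_count: int) -> int:
    """Map a review count to 1 (quietest) through 5 (busiest)."""
    return bisect.bisect_right(LEVEL_CUTS, review_count) + 1


def busyness_icons(review_count: int) -> str:
    """Render the level as five slots; filled circles stand in for person icons."""
    level = busyness_level(review_count)
    return "●" * level + "○" * (5 - level)
```

A place with 300 reviews falls in level 3 and renders as `●●●○○`. Users see a glance-readable level instead of the underlying count.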

Machine Leverage

04_AI
- Algorithmic ranking vs. manual curation
- Automated environmental filtering

The "Scoring Brain" handles 10+ variables per location across 60+ locations instantly.

Product Architecture

Google Maps search tool with a custom "Ranking Brain," batch-search parallelization for data density, and environmental penalty layers.

AI Leverage

Dynamic scoring engine with environmental penalty layers and parallel search optimization.

Outcomes & Learnings

Successfully transformed noisy public map data into a personalized psychological tool.
